Large language models (LLMs) have advanced remarkably in recent years. These AI tools are deep neural networks trained on vast datasets. With billions of parameters to draw on, they excel at a wide range of natural language processing (NLP) tasks.

Nonetheless, LLMs come with certain drawbacks. A significant challenge is their high cost of training and operation, owing to their demand for substantial computing power. Moreover, LLMs may occasionally produce biased or offensive text, which raises ethical concerns. The large datasets they are trained on also increase the risk of inaccuracy or unintended behavior. Consequently, interest is growing in small language models (SLMs). While not as powerful as LLMs, SLMs are cheaper to train and operate. Furthermore, they are less prone to generating biased or offensive content, addressing ethical considerations more effectively.

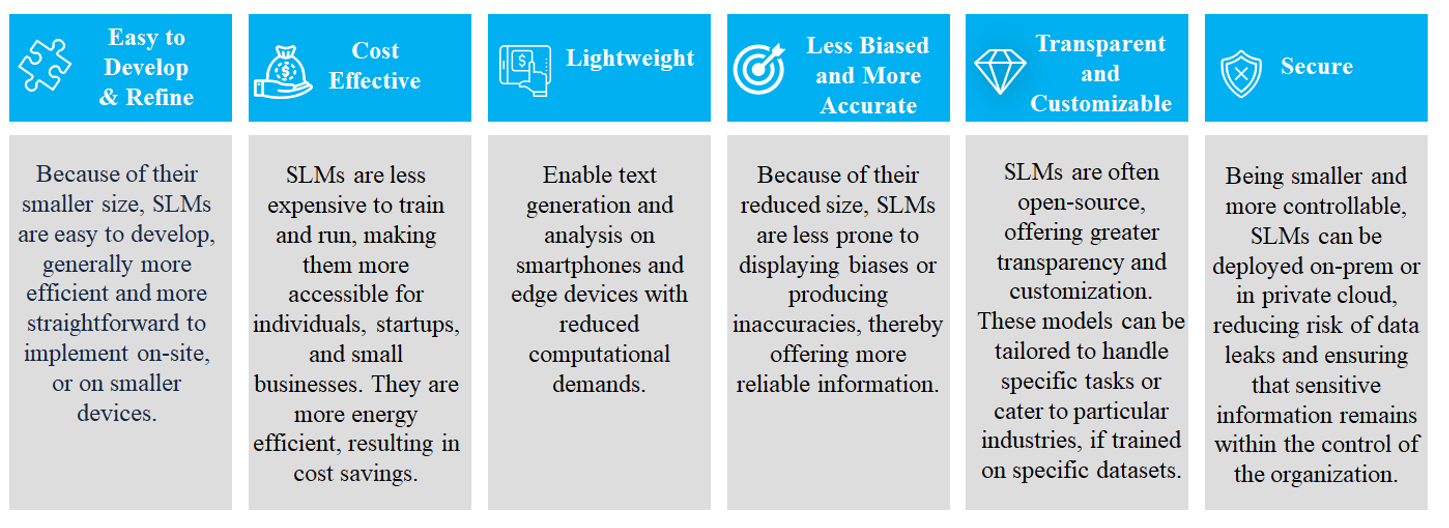

Small language models are scaled-down versions of larger AI models like GPT-4 or Gemini Ultra. They are designed to perform similar tasks (understanding and generating human-like text) but with fewer parameters. Consequently, they are cheaper and more environmentally responsible. Their more limited scope and training data make them better suited to, and easier to customize for, focused business use cases like chat, text search/analytics, and targeted content generation. Here are several significant features contributing to the increasing popularity of small language models.

In many business scenarios, SLMs strike a crucial balance between capability and control, offering a practical route to responsible AI integration. As organizational leaders seek to enhance operations with AI, SLMs are a viable option worth considering, aligning performance with the imperative of responsible usage. The differences between SLMs and LLMs are highlighted in the following table.

The market for SLMs is poised for significant growth, driven by their rising popularity. According to Valuate Research, the global market for SLMs was valued at $5.9 billion in 2023 and is projected to reach $16.9 billion by 2029, reflecting a robust CAGR of 18% during this period.
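As a quick sanity check on figures like these, the compound annual growth rate can be recomputed from the two endpoint values. The sketch below is illustrative only: the function names `cagr` and `project` are hypothetical, and the six-year compounding window (2023 to 2029) is an assumption, since the exact forecast period behind the quoted rate is not stated here. Under that assumption the implied rate comes out at roughly 19%, close to the quoted 18%. A different base year or rounding could account for the gap.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10% per year)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


def project(start_value: float, rate: float, years: float) -> float:
    """Value reached after compounding start_value at `rate` for `years` years."""
    return start_value * (1.0 + rate) ** years


# Market sizes quoted in the text, in billions of US dollars.
MARKET_2023 = 5.9
MARKET_2029 = 16.9

# Assumption: growth compounds over the six years from 2023 to 2029.
implied_rate = cagr(MARKET_2023, MARKET_2029, years=6)
print(f"Implied CAGR over 6 years: {implied_rate:.1%}")

# Cross-check: where the quoted 18% rate would take the 2023 figure.
print(f"2023 value grown at 18% for 6 years: {project(MARKET_2023, 0.18, 6):.1f}")
```

Changing the `years` argument shows how sensitive the implied rate is to the chosen forecast window.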

SLMs can generate high-quality output, especially in the specific domains for which they have been fine-tuned. Their compact size and efficient computation also make them well suited to deployment on edge devices, mobile applications, and other resource-constrained environments. For example, Apple has recently released eight small AI language models intended for use on smartphones and other devices as proof-of-concept research releases, made available under Apple’s open source-like Sample Code License. In general, these models can be tuned in domains such as:

At Blackstraw, we recognize the transformative potential of SLMs and are committed to helping businesses unlock their full capabilities. Drawing on our expertise in AI, specifically in NLP, computer vision, and machine learning, we have empowered numerous clients across diverse industries through applications including text generation, summarization, language translation, sentiment analysis, text classification, and information retrieval. We have used our proficiency in customizing SLMs to support our global customers in various ways, including:

Whether you seek to streamline operations, enhance decision-making processes, or generate personalized content at scale, our tailored AI solutions are crafted to address your specific needs and objectives. Partner with Blackstraw to embark on a journey of innovation and growth, driven by the boundless potential of SLMs.